The Governance Gap: What Harvey Misses

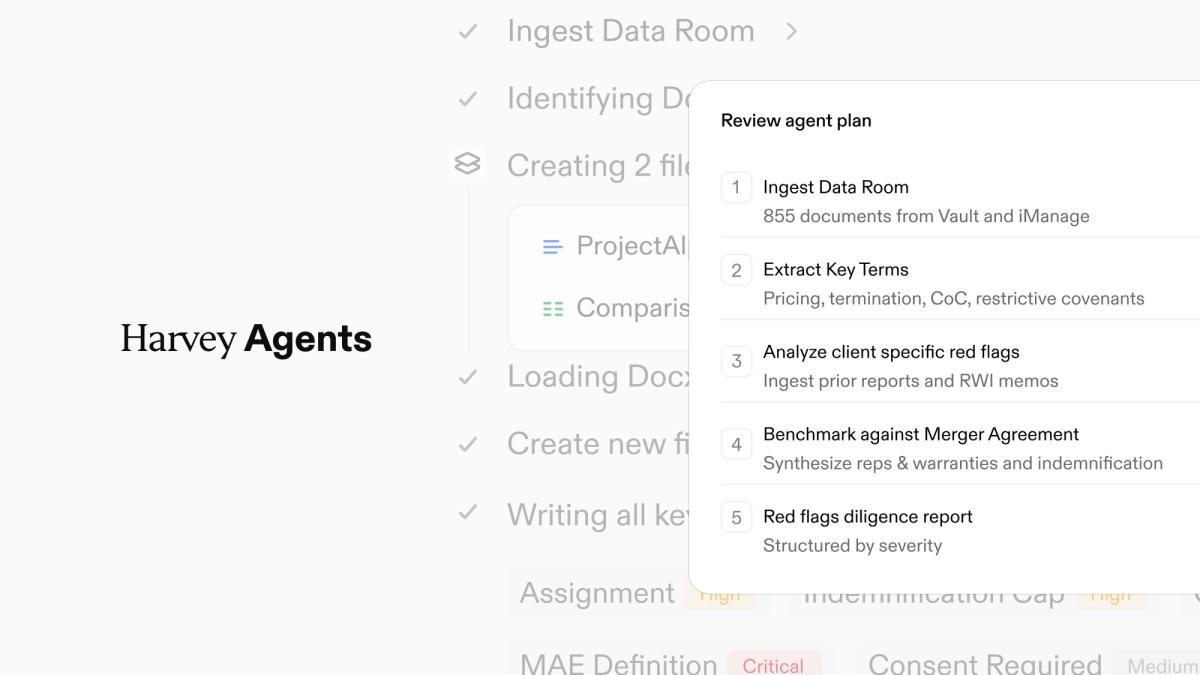

Autonomous agents are changing legal

A post by Gabe Pereyra, Harvey’s co-founder and president, argues that autonomous agents are becoming a governance problem, not just a technical one.

He's right, and he's right that the questions agents raise are legal as much as technical. But the piece treats them as a closing thought, a horizon to watch, rather than the engineering problem they already are. Pereyra describes a world where agents operate with real organizational authority, making decisions triggered by incidents, bug reports, and customer feedback. Then he asks where accountability sits, how risk is managed, what governance is required. Those aren't rhetorical questions. They're design specifications.

The gap between what agents can do and what organizations can verify about what agents did is widening every month. Closing it requires more than recognizing that legal teams will define what deployment looks like. It requires building the infrastructure that makes that definition operational.

Legal as the governing function, not just the governed one

Pereyra's most interesting claim is that in-house legal teams won't just navigate their own transformation; they'll serve as stewards for how the entire organization deploys agents. Engineering defines capabilities. Legal defines how those capabilities get deployed safely, where accountability sits, what risks are tolerable, and how trust gets earned.

That framing is correct but incomplete. Stewardship implies oversight of something that already exists. What's needed is more foundational: legal has to help design the governance architecture before agents are deployed, not review their behavior after the fact.

Every governance framework we have (compliance, audit, risk management, approval chains) was designed for human actors operating through human coordination structures. Managers review work. Documentation requirements assume someone wrote the document and can explain their reasoning. An agent that processes a customer complaint, drafts a response, identifies a contract issue, escalates it internally, and proposes a resolution has made dozens of judgment calls in minutes. No manager reviewed them. No approval chain was triggered. The reasoning that produced those decisions exists only as transient context that disappears when the session ends.

That's the governance gap. The question isn't whether agents are capable enough; they are. It's whether the organization can reconstruct, verify, and audit what the agent actually decided and why. Legal teams that wait to be asked to govern agent deployment will be governing in the dark.

Why agents need decision records, not just audit logs

When an autonomous agent makes a decision inside your organization, can you reconstruct why?

Agent outputs without verifiable decision records are governance theater. You can deploy an agent across your entire legal function, and if you can't produce a tamper-evident record of what it reasoned through, what constraints it operated under, what context it accessed, and what alternatives it considered, you've automated your way into an audit nightmare.

This is the proof-of-execution problem: the agent equivalent of showing your work. What's needed is not the final answer but the reasoning path, the constraints applied, the context consumed, and the decision points along the way, sealed into a verifiable record that can be audited after the fact. Call it a Certificate of Action: a cryptographic receipt that proves not just what the agent did, but how it got there.
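One way to make "tamper-evident" concrete is a hash chain, where each recorded step commits to the hash of the step before it. The sketch below is a minimal illustration under my own assumptions, not a description of any existing product: the `DecisionLog` class, its field names, and the example steps are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, step):
    """Hash a step together with the hash of the entry before it."""
    payload = json.dumps({"prev_hash": prev_hash, "step": step}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only record of an agent's steps (hypothetical structure)."""

    def __init__(self):
        self.entries = []

    def append(self, step):
        """Record one step (context read, constraint, decision) and return its hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"prev_hash": prev_hash, "step": step, "hash": _digest(prev_hash, step)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if _digest(prev_hash, entry["step"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = DecisionLog()
    log.append({"kind": "context", "detail": "read deal record"})
    log.append({"kind": "constraint", "detail": "no commitments above approved discount"})
    log.append({"kind": "decision", "detail": "escalate contract issue to legal"})
    print(log.verify())  # True

    log.entries[0]["step"]["detail"] = "read entire customer database"
    print(log.verify())  # False: the stored hash no longer matches the content
```

A chain like this only detects edits to entries it still holds. To catch someone truncating the log or rebuilding the whole chain, the latest hash would also need to be signed or stored somewhere the agent cannot write.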

This isn't theoretical. The EU AI Act requires high-risk AI systems to log events automatically and requires the organizations deploying them to retain those logs. The SEC has been expanding expectations around algorithmic accountability. Insurance underwriters are starting to ask how companies verify AI-assisted decisions. The demand for proof of execution is arriving from multiple directions simultaneously, and most organizations have no infrastructure to meet it.

Spectre, the Harvey system Pereyra describes, is triggered by incidents, bug reports, customer feedback, and Slack messages, and makes decisions about what engineering work needs to happen. If it misroutes a critical bug, deprioritizes a security incident, or creates customer harm, the first question any regulator, auditor, or plaintiff's attorney will ask is: show me the decision record. Show me why the system did what it did. Without proof of execution, the answer is "we don't know."

The four-tier governance architecture

Recognizing that agents need governance is the easy part. Building governance that works at agent speed is hard.

Most organizations govern the instruction layer — what the agent is told to do. That's necessary and nowhere near sufficient.

Below that sits context: what information the agent can access. An agent processing a contract negotiation with access to the entire customer database has a different risk profile than one restricted to the specific deal record. Least privilege isn't just a security principle; it's a governance control.

Harder than both is intent: what objectives the agent is optimizing for. An agent tasked with "resolve customer complaints efficiently" will prioritize speed. An agent told to "resolve customer complaints in a way that preserves the customer relationship and complies with our service commitments" makes different decisions. The intent layer shapes every downstream judgment call, and most governance conversations never reach it.

The fourth tier is specification: what the agent can and cannot do, what triggers human review, what outputs require verification, and what records the agent must create about its own decisions. This is where proof of execution lives.

This is where Pereyra's insight about legal defining the bounds of trustworthy deployment becomes operational. Legal teams aren't just reviewing agent outputs; they're defining the intent parameters, the context boundaries, and the specification requirements that shape what agents can do.
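To show how the four tiers could be written down as one reviewable artifact, here is a rough sketch. Everything in it is an assumption made for illustration: the `GovernanceProfile` name, its fields, and the example actions are hypothetical, and a real deployment would need far richer rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernanceProfile:
    """One agent's governance settings, one group of fields per tier."""

    instruction: str                # tier 1: what the agent is told to do
    context_scopes: frozenset       # tier 2: data sources the agent may read
    intent: str                     # tier 3: the objective it optimizes for
    allowed_actions: frozenset      # tier 4: what the agent can do at all
    review_actions: frozenset       # tier 4: actions that trigger human review
    required_record_fields: tuple   # tier 4: what each decision record must hold

    def check_action(self, action):
        """Return 'deny', 'human_review', or 'allow' for a proposed action."""
        if action not in self.allowed_actions:
            return "deny"
        if action in self.review_actions:
            return "human_review"
        return "allow"

    def missing_record_fields(self, record):
        """List the required fields a decision record fails to include."""
        return [name for name in self.required_record_fields if name not in record]


complaints_agent = GovernanceProfile(
    instruction="Respond to inbound customer complaints.",
    context_scopes=frozenset({"ticket", "deal_record"}),
    intent="Resolve complaints while preserving the relationship and "
           "complying with our service commitments.",
    allowed_actions=frozenset({"draft_reply", "issue_credit", "escalate"}),
    review_actions=frozenset({"issue_credit"}),
    required_record_fields=("context_used", "constraints", "alternatives", "decision"),
)

if __name__ == "__main__":
    print(complaints_agent.check_action("draft_reply"))     # allow
    print(complaints_agent.check_action("issue_credit"))    # human_review
    print(complaints_agent.check_action("amend_contract"))  # deny
```

The point of the shape is that legal can read and sign off on a profile like this before the agent runs, instead of inferring the rules from its outputs afterward.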

Context: exposure and advantage

Agents are context consumers. Every decision an agent makes depends on the data, the history, the constraints, and the relationships it can access. This creates two problems organizations aren't addressing.

The first is security. An agent with access to customer records, contract terms, internal communications, and financial data is a single point of compromise that spans your entire information architecture. When Pereyra describes Spectre monitoring the company and making decisions, that implies broad context access across multiple systems. The governance question isn't just what Spectre decides; it's what Spectre can see.

The second is strategic. The organization that structures its context layer well — clean, accessible, well-organized contextual data about its operations — will get dramatically more value from agents than one drowning in unstructured information. Structured context isn't just an operational asset. It's a platform layer that compounds in value as agents get more capable.

Legal defines the context boundaries. What data can agents access? Under what conditions? With what restrictions? How do you maintain privilege when an agent is processing legal matter data alongside operational data? These are architectural decisions that need to be made before agents are deployed, not after.
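Those questions map naturally onto a deny-by-default access check. The following is a minimal sketch under stated assumptions: `ContextGrant`, `ContextBoundary`, the `privileged_session` flag, and the example sources and purposes are all invented for illustration, and real privilege analysis is considerably more involved than a boolean.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextGrant:
    """Permission to read one data source for one stated purpose."""

    source: str               # what data, e.g. "deal_record"
    purpose: str              # under what condition access is allowed
    privileged: bool = False  # restriction: source holds privileged legal material


class ContextBoundary:
    """Deny-by-default gate an agent must pass before reading a source."""

    def __init__(self, grants):
        self._grants = {(g.source, g.purpose): g for g in grants}

    def authorize(self, source, purpose, privileged_session=False):
        grant = self._grants.get((source, purpose))
        if grant is None:
            return False  # nothing is readable unless explicitly granted
        if grant.privileged and not privileged_session:
            return False  # keep privileged matter data out of operational sessions
        return True


boundary = ContextBoundary([
    ContextGrant(source="deal_record", purpose="contract_negotiation"),
    ContextGrant(source="legal_matter", purpose="contract_negotiation", privileged=True),
])

if __name__ == "__main__":
    print(boundary.authorize("deal_record", "contract_negotiation"))       # True
    print(boundary.authorize("customer_database", "contract_negotiation")) # False
    print(boundary.authorize("legal_matter", "contract_negotiation"))      # False
    print(boundary.authorize("legal_matter", "contract_negotiation",
                             privileged_session=True))                      # True
```

Keying grants on both source and purpose means the same agent can read a deal record for a negotiation without that access carrying over to unrelated tasks.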

The Trust Council: governance at organizational speed

Pereyra says in-house teams will fundamentally define the bounds of trustworthy deployment. That's right. But you can't do it with quarterly review cycles. Agents make thousands of decisions per day. By the time a governance committee reviews an agent's behavior, the agent has already made ten thousand more decisions using the same patterns.

What's needed is a new organizational mechanism — what I've been calling the Trust Council. A cross-functional body (legal, engineering, product, security) that operates at something closer to agent speed: setting policies that are machine-readable, monitoring agent behavior in near-real-time, adjusting constraints dynamically based on observed outcomes.

The Trust Council doesn't review individual agent decisions. It governs the framework the agent operates within, setting intent parameters, defining context boundaries, specifying proof-of-execution requirements, and monitoring for drift. When an agent starts making decisions that diverge from organizational intent, the Trust Council adjusts the framework.
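As a rough illustration of what a machine-readable policy plus drift monitoring could look like, consider the sketch below. It assumes a deliberately simple model that I am inventing for the example: each decision is a dict of boolean flags, and the policy states an expected rate for one flag with a tolerance band. The names `DriftPolicy`, `observed_rate`, and `has_drifted` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriftPolicy:
    """A policy the council sets once and software checks continuously."""

    metric: str           # boolean flag recorded on each decision
    expected_rate: float  # share of decisions expected to carry the flag
    tolerance: float      # allowed absolute deviation before flagging drift


def observed_rate(decisions, metric):
    """Share of decisions in the window where the flag is set."""
    if not decisions:
        return 0.0
    return sum(1 for d in decisions if d.get(metric)) / len(decisions)


def has_drifted(decisions, policy):
    """True when the observed rate falls outside the policy's band."""
    gap = abs(observed_rate(decisions, policy.metric) - policy.expected_rate)
    return gap > policy.tolerance


policy = DriftPolicy(metric="escalated_to_human", expected_rate=0.10, tolerance=0.05)

if __name__ == "__main__":
    normal_window = [{"escalated_to_human": i == 0} for i in range(10)]  # 1 of 10
    quiet_window = [{"escalated_to_human": False} for _ in range(10)]    # 0 of 10
    print(has_drifted(normal_window, policy))  # False
    print(has_drifted(quiet_window, policy))   # True: the agent stopped escalating
```

A check like this does not judge any single decision. It tells the council that the pattern has moved, which is the signal to revisit the framework.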

Pereyra's right that legal will determine whether this technology goes well for society. The Trust Council is one answer to how.

The real leverage equation

Pereyra concludes with this: as early and essential adopters, law firms and in-house teams will define what trustworthy adoption looks like, including where accountability resides, what risks are acceptable, what governance is necessary, and what it means to rely on an autonomous system within a real institution.

He has identified the right stakes. But defining trustworthy adoption requires proof-of-execution infrastructure so organizations can verify what agents actually did. Governance architectures need to operate at agent speed, not quarterly review speed. Legal teams should stop thinking of themselves as downstream reviewers and start defining the context boundaries and trust mechanisms that make autonomous agents governable in the first place.

Engineering is ahead of governance right now. That's natural: capability comes before controls. But the window for "figure it out later" is closing. The first major incident involving an autonomous agent making unsupervised decisions inside a real institution will change the conversation overnight.

Organizations that adapt well won't be those with the most agents, but those that have built the infrastructure to verify what their agents actually do. Pereyra is right that legal defines the boundaries. Now we need to build the architecture that makes those boundaries enforceable.