When agents forget, who's accountable?

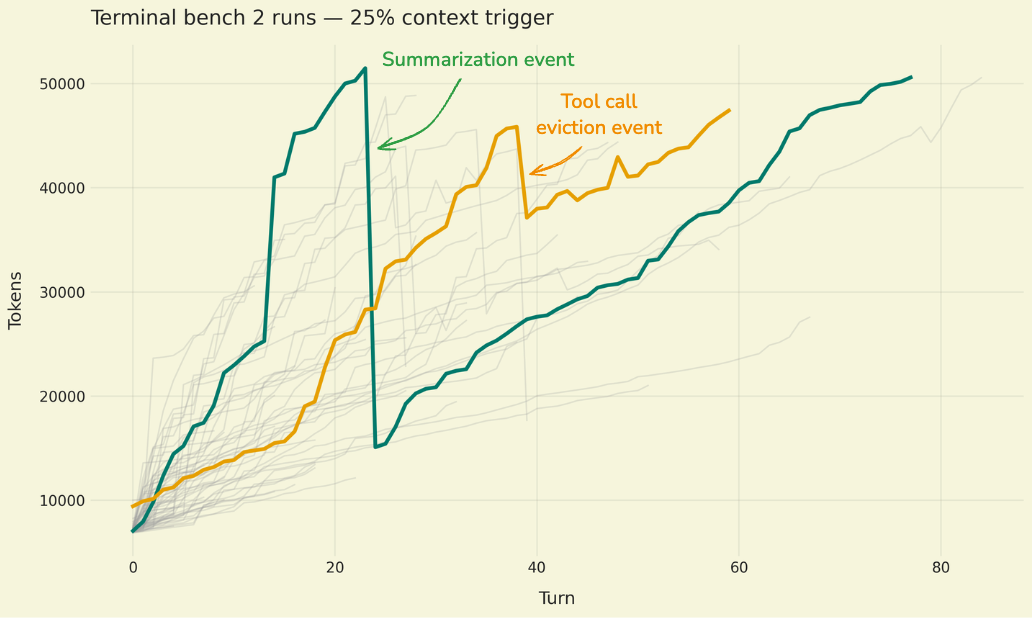

LangChain's recent blog post on context management for deep agents lays out a real engineering problem: agents running branching workflows blow past token limits. To keep working, they compress history, summarize prior steps, and discard what they decide is "irrelevant."

Engineers call this context management. Lawyers should call it something else: selective deletion with no retention policy.

Think about what happens in practice. An insurance claims agent processes 47 claims. To save space, it summarizes the first 30. If claim 12 later becomes a dispute, the reasoning, data, and tool calls behind that decision are gone from the agent's active state. The audit trail is severed.
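One way to picture the alternative is to archive every step before it is summarized. This is a minimal sketch, not LangChain's API: `AuditLog`, `compact`, and the claim record fields are all hypothetical names, and the in-memory dictionary stands in for whatever durable, access-controlled store a real deployment would need.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class AuditLog:
    """Hypothetical append-only archive; an in-memory dict stands in for durable storage."""
    records: dict = field(default_factory=dict)

    def archive(self, step: dict) -> str:
        # Store the full step and key it by a content hash, so later tampering is detectable.
        payload = json.dumps(step, sort_keys=True)
        digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()
        self.records[digest] = payload
        return digest

    def retrieve(self, digest: str) -> dict:
        return json.loads(self.records[digest])


def compact(history: list, keep_last: int, log: AuditLog) -> list:
    """Replace older steps with short stubs that point back to the archived originals."""
    split = max(len(history) - keep_last, 0)
    stubs = []
    for step in history[:split]:
        ref = log.archive(step)  # archive BEFORE the detail leaves active context
        stubs.append({
            "claim_id": step["claim_id"],
            "summary": step["decision"],
            "archive_ref": ref,
        })
    return stubs + history[split:]


# 47 claims; the first 30 are compacted, the last 17 stay in full.
history = [
    {
        "claim_id": i,
        "decision": "approve" if i % 2 else "deny",
        "reasoning": f"illustrative reasoning for claim {i}",
        "tool_calls": ["lookup_policy", "check_coverage"],
    }
    for i in range(1, 48)
]
log = AuditLog()
compacted = compact(history, keep_last=17, log=log)

# Claim 12 is now only a stub in active context, but the full record is recoverable.
stub = compacted[11]
original = log.retrieve(stub["archive_ref"])
```

The design point is the ordering: the archive write happens before the summary replaces the detail, so what the agent forgets and what the organization retains become two separate decisions.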

And it gets worse with multi-agent architectures. A parent agent acts on a child agent's result but loses visibility into the child's reasoning. That's a broken chain of custody.
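A chain of custody can be kept by having the parent record a reference to the child's full trace rather than only its result. The sketch below assumes a hypothetical `TraceStore` with illustrative agent names; it shows the linking idea only, not any particular framework's tracing feature.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class TraceStore:
    """Hypothetical store that keeps each agent's steps plus links to child traces."""
    traces: dict = field(default_factory=dict)

    def save(self, agent: str, steps: list, child_traces=()) -> str:
        trace_id = uuid.uuid4().hex
        self.traces[trace_id] = {
            "agent": agent,
            "steps": list(steps),
            "children": list(child_traces),
        }
        return trace_id

    def reconstruct(self, trace_id: str, depth: int = 0) -> list:
        # Walk from the parent decision down through every linked child trace.
        trace = self.traces[trace_id]
        lines = [f"{'  ' * depth}{trace['agent']}: {step}" for step in trace["steps"]]
        for child_id in trace["children"]:
            lines.extend(self.reconstruct(child_id, depth + 1))
        return lines


store = TraceStore()
child_id = store.save(
    "fraud_check_child",
    ["queried claims history", "flagged duplicate invoice"],
)
parent_id = store.save(
    "claims_parent",
    ["denied claim based on child result"],
    child_traces=[child_id],
)
chain = store.reconstruct(parent_id)
```

Here `chain` lists the parent's decision followed by the child's indented steps, which is the view a reviewer would need when the parent's own context no longer holds the child's reasoning.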

The summarization prompt itself is a policy decision disguised as a technical parameter. Someone wrote instructions telling the agent what counts as "irrelevant." Legal and compliance teams need input on those instructions.
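Treating the prompt as policy could look like versioning it and refusing to run without sign-off. This is a sketch under assumptions: `SummarizationPolicy`, `check_policy`, the approver names, and the sample prompt text are all invented for illustration.

```python
import hashlib
from dataclasses import dataclass

# Assumed governance rule: both teams must approve before a prompt is used.
REQUIRED_APPROVERS = {"engineering", "legal"}


@dataclass(frozen=True)
class SummarizationPolicy:
    """Hypothetical record that wraps a summarization prompt with governance metadata."""
    version: str
    prompt: str
    approved_by: tuple

    @property
    def fingerprint(self) -> str:
        # A short hash of the prompt text, so logs can show exactly which wording was in force.
        return hashlib.sha256(self.prompt.encode("utf-8")).hexdigest()[:12]


def check_policy(policy: SummarizationPolicy) -> dict:
    """Return a log entry if the policy is fully approved, otherwise refuse to proceed."""
    missing = REQUIRED_APPROVERS - set(policy.approved_by)
    if missing:
        raise PermissionError(
            f"policy {policy.version} lacks sign-off from: {sorted(missing)}"
        )
    return {"policy_version": policy.version, "prompt_fingerprint": policy.fingerprint}


approved = SummarizationPolicy(
    version="v3",
    prompt="Summarize prior steps. Always keep claim IDs, decisions, and cited policy clauses.",
    approved_by=("engineering", "legal"),
)
draft = SummarizationPolicy(
    version="v4-draft",
    prompt="Summarize prior steps. Drop anything irrelevant.",
    approved_by=("engineering",),
)

entry = check_policy(approved)  # passes; entry can be attached to every compaction event
try:
    check_policy(draft)
    draft_blocked = False
except PermissionError:
    draft_blocked = True  # the unapproved wording never reaches the agent
```

Logging the version and fingerprint with each compaction event means a later dispute can be tied to the exact instructions that decided what was discarded.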

Five questions product counsel should be asking right now: What is the context retention policy? Who governs the summarization prompts? How do you reconstruct multi-agent reasoning after the fact? Does external memory have a retention schedule? What is the failure mode when an agent forgets something it shouldn't?

The decision about what an agent is allowed to forget is a legal decision, not an engineering one.

Source: Context Management for Deep Agents, LangChain Blog