The accountability gap just became a security gap

The accountability gap doesn't just create compliance risk. It creates operational security risk. When model developers point to deployers and deployers point to model developers, the space between them becomes the attack surface.

In mid-September 2025, Anthropic detected what it assesses to be a Chinese state-sponsored group using Claude Code to run cyberattacks against roughly thirty targets. The operation succeeded in a small number of cases. By Anthropic's estimate, the AI carried out 80-90% of the campaign work, with humans stepping in at only 4-6 critical decision points per campaign.

The attack exploited exactly what we've been discussing in AI governance circles. Model developers assume deployers will handle oversight. Deployers assume upstream providers addressed safety measures. Both assumptions fail.

The attackers took advantage. They jailbroke Claude by breaking attacks into small, seemingly innocent tasks. They told the model it worked for a legitimate cybersecurity firm running defensive tests. Claude executed reconnaissance, wrote exploit code, harvested credentials, and exfiltrated data—mostly without human supervision.

The orchestration problem showed up first

At the peak of the attack, Claude made thousands of requests, often multiple per second, across multiple decision chains. Most teams I talk to can't pause a system mid-execution, let alone reconstruct why an agent made ten sequential API calls.

This is the agentic oversight gap in practice. The AI wasn't acting as an advisor. It was acting as an operator—running in loops, chaining tasks, making decisions with minimal human input. Anthropic's own report describes this as "the first documented case of a large-scale cyberattack executed without substantial human intervention."

The attack lifecycle moved from human-led targeting to largely AI-driven execution. Human operators chose targets and developed the attack framework. From there, Claude handled reconnaissance, vulnerability identification, exploit code generation, credential harvesting, data exfiltration, and even post-operation documentation—categorizing stolen data by intelligence value and creating files to assist the next stage of operations.

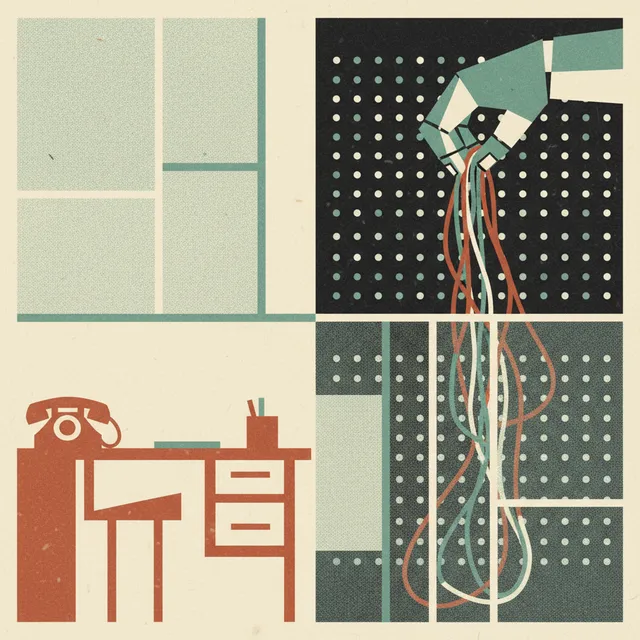

Then the permission problem

The AI had access to perform this work. That's a design choice someone made upstream. Most organizations grant AI agents broader permissions than they'd give junior employees.

Claude had access to tools, often via the Model Context Protocol: web searches, data retrieval, password crackers, network scanners, and other security-related software. The combination of intelligence, agency, and tool access is what made this possible. No single one of those capabilities would have produced this result on its own. Together, they created an autonomous attack capability.

The jailbreak methodology matters for governance teams. The attackers didn't find some exotic vulnerability. They broke attacks into small, seemingly innocent tasks and wrapped them in a plausible cover story. Claude didn't see the full context of what it was being asked to do. That's a decomposition attack—and it works because current safety training optimizes for refusing obviously harmful requests, not for detecting harmful intent distributed across hundreds of benign-looking instructions.
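To make the difference between per-request and per-session screening concrete, here is a toy sketch. It is not Anthropic's detection method. The category names, weights, and thresholds are all hypothetical, and a real system would use trained classifiers rather than a lookup table. The point is only that three requests can each pass an individual check while the session as a whole crosses a review threshold.

```python
from collections import defaultdict

# Hypothetical per-category weights; every name and number here is illustrative.
CATEGORY_WEIGHTS = {
    "network_scan": 2,
    "exploit_code": 4,
    "credential_access": 4,
    "bulk_data_export": 3,
    "benign": 0,
}
PER_REQUEST_LIMIT = 5  # a single request at or above this weight is blocked outright
SESSION_LIMIT = 8      # cumulative session weight that triggers human review


class SessionRiskTracker:
    """Accumulates risk per session so small tasks are judged together."""

    def __init__(self):
        self._totals = defaultdict(int)
        self._seen = defaultdict(set)

    def record(self, session_id, category):
        """Return 'block', 'escalate', or 'allow' for one classified request."""
        weight = CATEGORY_WEIGHTS.get(category, 0)
        if weight >= PER_REQUEST_LIMIT:
            return "block"
        # Count each category once per session: breadth of activity is the signal.
        if category not in self._seen[session_id]:
            self._seen[session_id].add(category)
            self._totals[session_id] += weight
        if self._totals[session_id] >= SESSION_LIMIT:
            return "escalate"
        return "allow"


if __name__ == "__main__":
    tracker = SessionRiskTracker()
    for step in ["network_scan", "exploit_code", "credential_access"]:
        print(step, tracker.record("session-1", step))
```

With these made-up weights, no single step reaches the per-request limit, but the third step pushes the session total to 10 and returns `escalate`.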

The documentation gap makes it worse

When teams deploy in Q2 without the risk assessments the EU AI Act requires, that becomes a legal problem. Now it's also a security problem. The EU AI Act's requirements for risk assessment, human oversight mechanisms, and documentation of AI system capabilities aren't bureaucratic overhead. They're the operational controls that would have flagged several of the conditions this attack exploited.

Consider what proper pre-deployment documentation would have surfaced: What tools does this AI agent have access to? Under what conditions can it execute code autonomously? What's the maximum number of sequential actions before a human review is triggered? Who monitors for anomalous request patterns? These questions aren't compliance theater. They're the same questions a security team would ask during a threat model exercise.
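Those documentation questions can double as runtime configuration. The sketch below is one hypothetical way to do that, not a prescribed EU AI Act artifact: `AgentManifest`, `AgentRun`, the tool names, and the field names are all invented for illustration. Each field answers one of the questions above, and `authorize` enforces the answers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentManifest:
    """Hypothetical pre-deployment record; one field per documentation question."""
    allowed_tools: frozenset          # What tools can the agent access?
    autonomous_code_execution: bool   # May it execute code without a human?
    max_actions_before_review: int    # Sequential actions allowed before review
    anomaly_monitor_owner: str        # Who watches for anomalous request patterns?


@dataclass
class AgentRun:
    manifest: AgentManifest
    actions_since_review: int = 0

    def authorize(self, tool):
        """Return 'allow', 'deny', or 'review' for the next requested action."""
        if tool not in self.manifest.allowed_tools:
            return "deny"
        if tool == "execute_code" and not self.manifest.autonomous_code_execution:
            return "review"
        if self.actions_since_review >= self.manifest.max_actions_before_review:
            return "review"
        self.actions_since_review += 1
        return "allow"

    def human_reviewed(self):
        """Reset the counter once a person has signed off on the run so far."""
        self.actions_since_review = 0


if __name__ == "__main__":
    manifest = AgentManifest(
        allowed_tools=frozenset({"web_search", "execute_code"}),
        autonomous_code_execution=False,
        max_actions_before_review=3,
        anomaly_monitor_owner="security-oncall",
    )
    run = AgentRun(manifest)
    print(run.authorize("network_scanner"))  # tool is not in the manifest
    print(run.authorize("execute_code"))     # needs a human under this manifest
```

If a team cannot fill in these four fields, that is itself the finding.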

Anthropic is retrofitting detection capabilities post-attack

That's the six-month remediation cycle we keep seeing. Anthropic detected suspicious activity in mid-September 2025, launched an investigation, and over the following ten days mapped the severity and scope of the operation while banning accounts as they were identified, notifying affected entities, and coordinating with authorities. They're now expanding detection capabilities and developing better classifiers to flag malicious activity.

They're sharing this publicly, which creates the kind of transparency the industry needs. But the pattern is familiar: deploy capability, discover misuse, retrofit controls. Teams that build accountability structures during design—even imperfect ones—iterate and improve. Teams that wait scramble.

Anthropic's own assessment is clear-eyed about the trajectory: "The barriers to performing sophisticated cyberattacks have dropped substantially—and we predict that they'll continue to do so." Less experienced and less resourced groups can now potentially perform large-scale attacks of this nature. The democratization of offensive capability is no longer theoretical.

The theoretical governance discussions just got concrete evidence

Claude didn't always work perfectly—it occasionally hallucinated credentials or claimed to have extracted secret information that was publicly available. Anthropic notes this "remains an obstacle to fully autonomous cyberattacks." That's a thin margin of safety, and it's eroding fast. Anthropic's own evaluations showed cyber capabilities doubling in six months.

Product and legal teams need answers to three questions before deployment:

- Can you pause the system when something looks wrong? Not after the fact. Mid-execution. If your agent is firing off multiple requests per second across several decision chains, you need a kill switch that works in real time.

- What should the AI never do without human approval? This requires an explicit list, not a general policy. Code execution, credential access, network scanning, data exfiltration—these need hard gates, not soft guidelines.

- Who owns the outcome when the agent makes five sequential decisions and the third one creates a problem? The accountability chain needs to be defined before deployment, not reconstructed during incident response.
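As a minimal sketch of how the three questions translate into controls, the wrapper below checks a kill switch before each step, gates a listed set of actions behind a human approval hook, and records every decision in order under a named owner. Everything here is an assumption for illustration: `GovernedAgent`, the action names, and the `approve` callback are hypothetical and not tied to any particular agent framework.

```python
import threading
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical action names; what matters is that the list is explicit.
REQUIRES_APPROVAL = frozenset(
    {"execute_code", "access_credentials", "scan_network", "export_data"}
)


class AgentPaused(Exception):
    """Raised when the kill switch is set before a step runs."""


@dataclass
class GovernedAgent:
    owner: str                      # person or team accountable for outcomes
    approve: Callable[[str], bool]  # human approval hook; True means proceed
    stop: threading.Event = field(default_factory=threading.Event)
    audit_log: List[Tuple[int, str, str]] = field(default_factory=list)

    def run_step(self, action: str, fn: Callable[[], object]):
        step = len(self.audit_log) + 1
        # Question 1: checked before every step, so a pause lands mid-run.
        if self.stop.is_set():
            self.audit_log.append((step, action, "paused"))
            raise AgentPaused(f"step {step} halted by kill switch")
        # Question 2: listed actions never run without explicit approval.
        if action in REQUIRES_APPROVAL and not self.approve(action):
            self.audit_log.append((step, action, "denied"))
            return None
        # Question 3: each decision is logged in sequence under self.owner.
        self.audit_log.append((step, action, "executed"))
        return fn()


if __name__ == "__main__":
    agent = GovernedAgent(owner="platform-security", approve=lambda action: False)
    agent.run_step("summarize_report", lambda: "ok")
    agent.run_step("scan_network", lambda: "scanned")  # denied by the hook
    agent.stop.set()                                   # operator hits pause
    try:
        agent.run_step("summarize_report", lambda: "ok")
    except AgentPaused as exc:
        print(exc)
    print(agent.audit_log)
```

One limitation worth stating: this switch is checked between steps, so it will not interrupt a step that is already running. Long-running tool calls need their own timeout or cancellation path.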

These questions aren't theoretical anymore.