Operationalizing the NIST AI Risk Management Framework: Beyond Accuracy and Checklists

The NIST framework provides the map, but fostering a true culture of responsibility is the journey.

Artificial intelligence is advancing at a dizzying pace, and with it comes a growing sense that its risks are too complex and abstract to manage effectively. We hear about bias, hallucinations, and unforeseen consequences, but finding practical guidance feels like searching for a needle in a haystack of technical jargon and high-level principles.

Enter the National Institute of Standards and Technology (NIST) AI Risk Management Framework (AI RMF). While it might sound like a dry government document, it’s a source of clear, practical, and sometimes surprising guidance. It’s not about rigid rules but about building a new kind of organizational muscle for responsible innovation. This article distills five of the most impactful and counter-intuitive takeaways from the framework for anyone interested in building, deploying, or using AI responsibly.

Five Impactful Takeaways from the NIST AI RMF

It’s Not a Lawbook, It’s a Culture-Building Guide

One of the first things to understand about the NIST AI RMF is what it isn't. It is a voluntary, non-regulatory framework. Its purpose is not to create a rigid checklist for compliance teams to tick off. Instead, its primary goal is to help organizations foster an internal culture of responsible AI practice, anchored in a "rights-preserving approach" that puts the protection of individuals at the forefront.

This is a significant distinction. It frames AI safety as a continuous, proactive cultural practice rather than a reactive task to avoid legal trouble. Developed through consensus among more than 240 organizations from private industry, civil society, academia, and federal, state, and local government agencies, the framework is designed to be a shared guide for a journey, not a set of commandments handed down from on high. It treats responsible AI as a foundational element of how an organization operates, thinks, and builds.

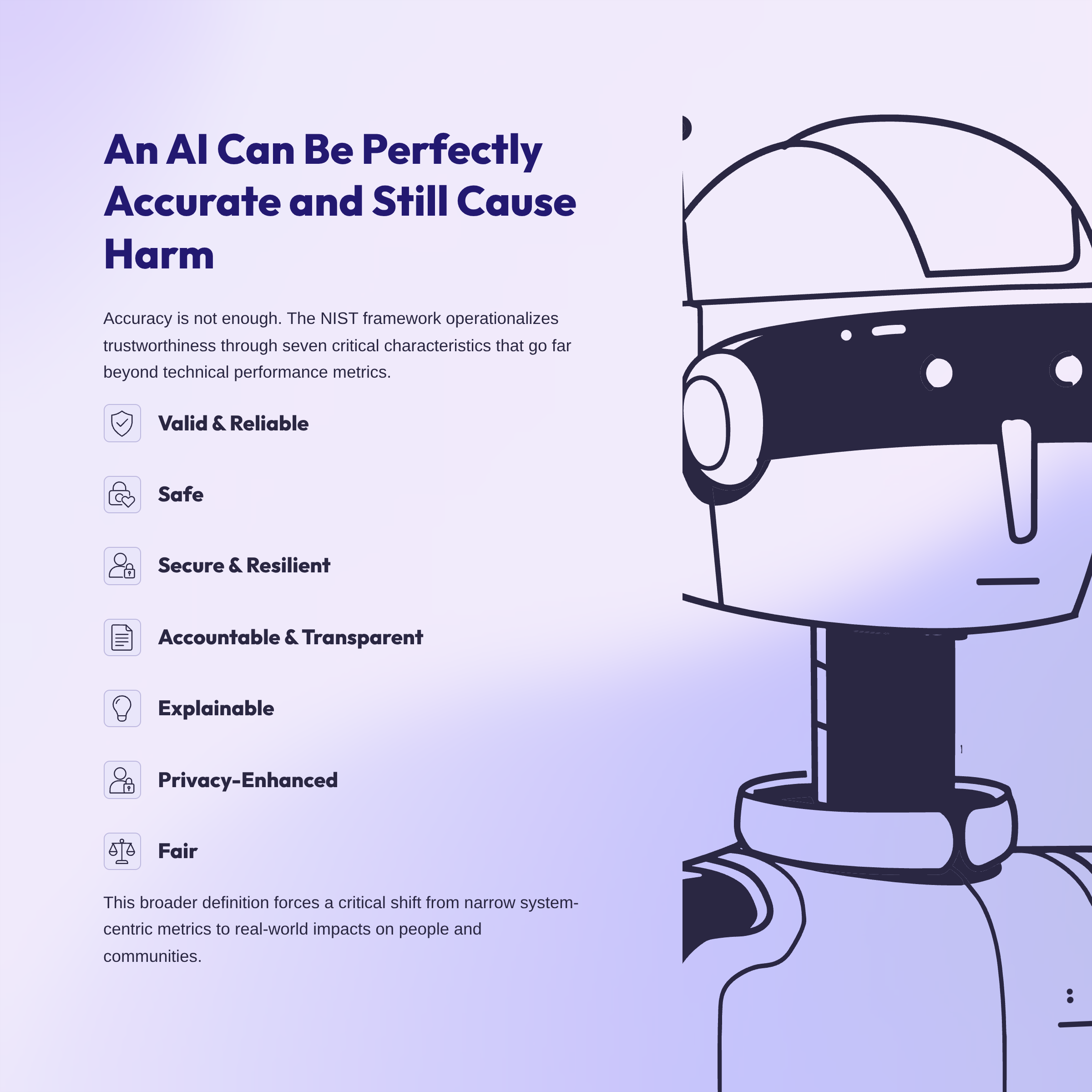

An AI Can Be Perfectly Accurate and Still Cause Harm

In the world of machine learning, accuracy is often treated as the ultimate measure of success. But the NIST framework makes a crucial point: trustworthiness is about much more than just getting the right answer. An AI system can perform its technical task with perfect accuracy and still cause significant real-world harm.

To address this, NIST operationalizes trustworthiness through seven key characteristics: valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed. This broader definition is a cornerstone of the framework's philosophy.

"Systems can perform accurately and also be harmful. This is why we operationalize seven trustworthy characteristics... this is bigger than accuracy."

This insight is critical because it forces a shift in focus. It moves the evaluation of an AI system from narrow, system-centric metrics (like accuracy) to a wider, socio-technical perspective that considers its real-world impacts on people and communities within specific contexts.
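
One way to make this shift concrete is to record evidence for each of the seven characteristics alongside the accuracy figure, so that a strong accuracy number cannot stand in for the rest. The sketch below is only an illustration: `TrustworthinessReview`, its fields, the example system name, and the "every characteristic needs evidence" rule are hypothetical and are not structures or requirements defined by NIST.

```python
from dataclasses import dataclass, field

# The seven characteristics as listed in this article's summary of the framework.
CHARACTERISTICS = (
    "valid and reliable",
    "safe",
    "secure and resilient",
    "accountable and transparent",
    "explainable and interpretable",
    "privacy-enhanced",
    "fair with harmful bias managed",
)


@dataclass
class TrustworthinessReview:
    """Hypothetical review record; not a structure defined by NIST."""

    system_name: str
    accuracy: float  # narrow, system-centric metric in [0, 1]
    # Maps a characteristic to a short note describing the evidence gathered.
    evidence: dict[str, str] = field(default_factory=dict)

    def gaps(self) -> list[str]:
        """Return the characteristics that have no recorded evidence."""
        return [c for c in CHARACTERISTICS if not self.evidence.get(c)]

    def ready_for_release(self) -> bool:
        """Assumed rule: accuracy is ignored; every characteristic needs evidence."""
        return not self.gaps()


# A perfectly accurate system with evidence for only one characteristic.
review = TrustworthinessReview(
    system_name="example-screening-model",  # made-up name
    accuracy=1.0,
    evidence={"valid and reliable": "evaluated on a held-out test set"},
)
print(review.ready_for_release())  # False
print(review.gaps())               # the six characteristics still lacking evidence
```

The point of the design is that `accuracy` never enters the release decision: a score of 1.0 leaves six open gaps, which mirrors the article's claim that trustworthiness is bigger than accuracy.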

To Manage AI, You Must First Avoid Defining It

Here is a truly counter-intuitive move from a standards organization: the NIST AI RMF deliberately does not define "AI." The framework's architects recognized that attempting to create a single, enduring definition of artificial intelligence would be a "very risky... controversial endeavor," bogging them down in endless philosophical debates.

Instead, they took a more practical approach. The framework focuses on defining "AI systems" based on what they do—specifically, systems that "generate any output such as predictions, recommendations or decisions." This clever sidestep makes the framework incredibly resilient and practical. It avoids academic arguments and grounds the guidance in the tangible technologies that people are actually building and deploying today. This approach ensures the framework remains relevant as the technology evolves and squarely includes modern tools like generative AI within its scope.

"Risk" Isn't Just a Four-Letter Word

When most people hear "risk management," they think exclusively about preventing bad things from happening. The NIST framework offers a more balanced and strategic definition of risk: "an event's probability of occurring and the magnitude or degree of consequences should that event occur."

The key insight here is that these consequences can be positive or negative, resulting in either opportunities or threats. This reframes risk management from a purely defensive activity into a strategic advantage. It gives organizations a formal language to pursue ambitious AI goals and the "amazing things that AI can do" responsibly, rather than letting a purely defensive risk posture stifle innovation. It's about making conscious, informed decisions on both sides of the risk-reward equation.
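
The definition above can be sketched as a small calculation. This is a minimal illustration under stated assumptions, not the framework's method: the quoted definition names probability and magnitude but does not prescribe how to combine them, so multiplying the two is an assumption here, as is giving magnitude a sign to represent opportunities (positive) and threats (negative). The class name `RiskEvent`, the example events, and all numbers are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskEvent:
    """Hypothetical record for one possible event tied to an AI system."""

    description: str
    probability: float  # likelihood of the event occurring, in [0, 1]
    magnitude: float    # signed consequence: > 0 beneficial, < 0 harmful

    def __post_init__(self) -> None:
        if not 0.0 <= self.probability <= 1.0:
            raise ValueError("probability must be between 0 and 1")

    @property
    def expected_impact(self) -> float:
        # Assumption: combine the two parts of the definition by multiplying them.
        return self.probability * self.magnitude

    @property
    def kind(self) -> str:
        if self.magnitude > 0:
            return "opportunity"
        if self.magnitude < 0:
            return "threat"
        return "neutral"


# Illustrative, made-up events and scores.
events = [
    RiskEvent("faster claims triage", probability=0.5, magnitude=8.0),
    RiskEvent("unfair denial of claims", probability=0.25, magnitude=-20.0),
]

for event in events:
    print(event.kind, event.expected_impact)  # opportunity 4.0, then threat -5.0

net = sum(event.expected_impact for event in events)
print(net)  # -1.0
```

Putting both kinds of event in the same list is the useful part: the upside and the downside are weighed in one place, rather than tracking only the threats.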

AI Safety is Everyone's Job—Not Just the Coders' or Lawyers'

A common mistake is to silo AI risk in the legal department or confine it to the engineering team. The framework strongly pushes back on this, emphasizing that risk management is a shared responsibility across the entire AI lifecycle. It introduces the concept of "AI Actors" to describe the wide range of people involved.

This includes the obvious roles like designers and developers, but also system integrators, end-users, civil society organizations, and researchers. The rationale is simple but powerful: different actors have different risk perspectives. As one speaker on the framework put it, "an AI developer who makes a generative AI model available can have a different risk perspective than the person who's responsible for deploying it." Bringing these multidisciplinary perspectives together is essential for identifying the unforeseen risks, the "unknown unknowns," that no single team could anticipate alone.

From Principles to Practice

Taken together, these takeaways reveal that effective AI risk management is a holistic, socio-technical endeavor. It requires cultivating a rights-preserving culture where diverse AI Actors share responsibility, shifting the focus from mere accuracy to broad trustworthiness, and treating risk not just as a threat to be mitigated, but as an opportunity to be managed. It extends far beyond technical metrics, legal checklists, and the code itself.

The NIST framework provides the map, but fostering a true culture of responsibility is the journey. It prompts a critical question for all of us involved in technology. What is the first step your organization—or even you as an individual—can take to start building it?