Not all AI agents carry the same legal risk

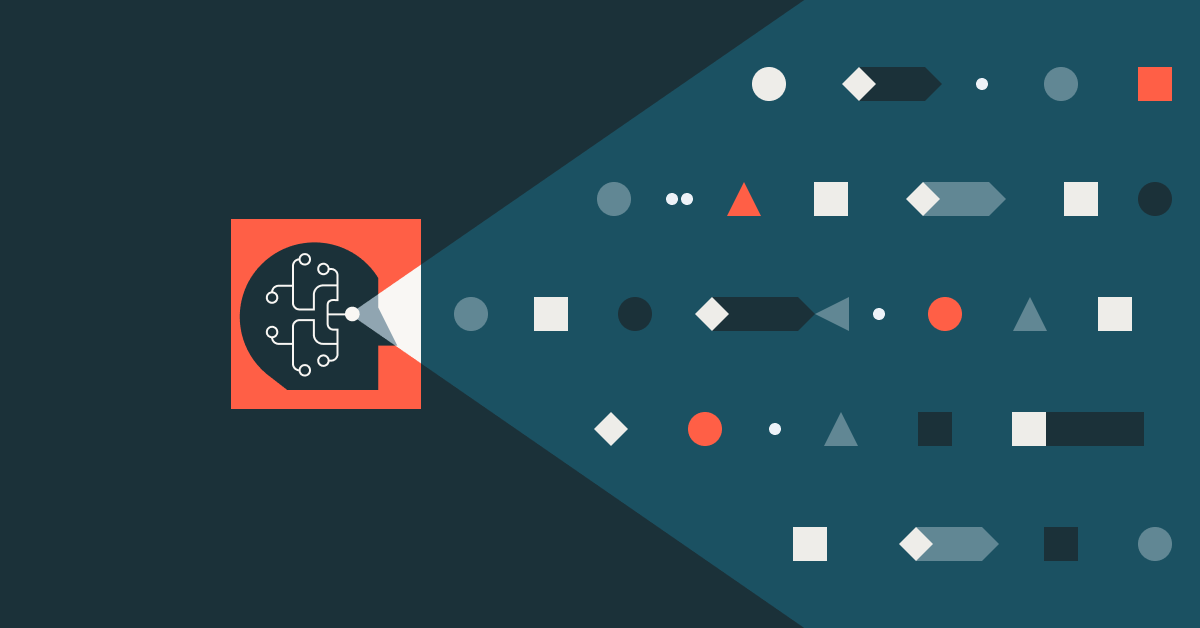

Your governance framework should distinguish between reflex agents, learning agents, and multi-agent systems, because their liability profiles are fundamentally different. Source taxonomy: https://www.databricks.com/blog/types-ai-agents-definitions-roles-and-examples

There's a growing problem in AI governance: we talk about "agents" as if they're one thing. They're not. And the legal risk profile of a simple reflex agent that follows if-then rules is fundamentally different from a learning agent that adapts its behavior based on outcomes it observes in production.

Databricks recently published a useful taxonomy breaking AI agents into distinct categories — simple reflex, model-based reflex, goal-based, utility-based, learning, hierarchical, and multi-agent systems. It's written for a technical audience, but the implications for legal and product teams are significant and largely unaddressed.

Why the distinction matters for governance

Most enterprise AI policies I've seen treat "AI agent" as a single category. You get one risk assessment template, one approval workflow, one oversight protocol. That made sense when the only AI in production was a chatbot following a script. It doesn't make sense now.

Here's why: a simple reflex agent operates on predefined condition-action rules. It doesn't learn. It doesn't adapt. It doesn't remember previous interactions. The legal risk profile is relatively contained: if something goes wrong, you can trace the output back to the specific rule that fired. Liability analysis is comparatively straightforward.
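To make the traceability point concrete, here is a minimal sketch of a simple reflex agent. The rule IDs, the `amount` field, and the action names are hypothetical, chosen only for illustration; the point is that every decision comes back paired with the rule that produced it.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Rule:
    rule_id: str
    condition: Callable[[dict], bool]
    action: str


# Hypothetical rule set, for illustration only. Order matters: first match wins.
RULES: List[Rule] = [
    Rule("R1", lambda p: p["amount"] > 10_000, "escalate_to_human"),
    Rule("R2", lambda p: p["amount"] > 0, "auto_approve"),
]


def decide(percept: dict, rules: List[Rule] = RULES) -> Tuple[str, Optional[str]]:
    """Return (action, rule_id) for the first rule whose condition matches.

    No state is kept between calls, so the same input always yields
    the same output and the same rule_id.
    """
    for rule in rules:
        if rule.condition(percept):
            return rule.action, rule.rule_id
    return "no_action", None


print(decide({"amount": 25_000}))  # action plus the rule that fired
```

Because the agent has no memory and no learned parameters, an audit only needs the input and the rule set that was live at the time.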

A model-based reflex agent maintains an internal representation of its environment. It's making inferences about things it can't directly observe. That raises a different oversight challenge: you have to review the assumptions built into that internal model, not just the rules. When the agent's internal model diverges from reality, its outputs diverge too, and the failure mode isn't as simple as a bad rule.

Now move to goal-based agents. These evaluate multiple possible action paths against a defined objective. They're making planning decisions. The question shifts from "did it follow the rule" to "was the goal properly specified, and were the paths it considered acceptable?" For product counsel, that means the specification itself becomes a liability surface.

Utility-based agents add another layer: they're not just pursuing a goal, they're optimizing across competing priorities using a utility function. Who defined that function? What trade-offs did it encode? If the agent optimizes for speed over accuracy in a context where accuracy matters — say, benefits determination or credit decisioning — the utility function is where the legal exposure lives.
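A small sketch shows why the utility function is the place to look. The option names, scores, and weights below are assumptions invented for illustration, not values from any real system. The same agent, given the same options, picks a different action purely because the weights changed.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Option:
    name: str
    speed: float     # 0..1, higher is faster (hypothetical score)
    accuracy: float  # 0..1, higher is more accurate (hypothetical score)


def utility(option: Option, w_speed: float, w_accuracy: float) -> float:
    """Weighted sum of the two competing priorities."""
    return w_speed * option.speed + w_accuracy * option.accuracy


def choose(options: List[Option], w_speed: float, w_accuracy: float) -> Option:
    """Pick the option with the highest utility under the given weights."""
    return max(options, key=lambda o: utility(o, w_speed, w_accuracy))


# Hypothetical options for a decisioning workflow.
OPTIONS = [
    Option("fast_heuristic", speed=0.9, accuracy=0.6),
    Option("full_review", speed=0.3, accuracy=0.95),
]

print(choose(OPTIONS, w_speed=0.7, w_accuracy=0.3).name)  # fast_heuristic
print(choose(OPTIONS, w_speed=0.2, w_accuracy=0.8).name)  # full_review
```

The two weights are the encoded trade-off. Whoever sets them is making the policy decision, which is why they deserve review before deployment.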

Learning agents are where the governance challenge intensifies. These agents modify their own behavior based on experience. The agent you approved in a risk assessment six months ago may not be the same agent running today. That's not a hypothetical — it's how learning agents work by design. Which means static, point-in-time risk assessments are structurally inadequate for this category.
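One way to move past point-in-time review is to record a behavioral baseline at approval and compare live behavior against it. The sketch below is deliberately simple and rests on assumptions: it tracks a single metric (approval rate), and the 10-point tolerance is an arbitrary placeholder, not a recommended threshold. Real monitoring would likely track several metrics and use a proper statistical test.

```python
from typing import Sequence


def approval_rate(decisions: Sequence[str]) -> float:
    """Share of decisions equal to 'approve'."""
    if not decisions:
        raise ValueError("need at least one decision")
    return sum(1 for d in decisions if d == "approve") / len(decisions)


def drift_exceeds(
    baseline: Sequence[str],
    recent: Sequence[str],
    tolerance: float = 0.10,  # assumed placeholder, tune per deployment
) -> bool:
    """Flag when the recent approval rate moves more than `tolerance`
    (in absolute terms) away from the rate recorded at approval time."""
    return abs(approval_rate(recent) - approval_rate(baseline)) > tolerance


# Hypothetical data: behavior captured at risk-assessment time vs. today.
baseline = ["approve"] * 70 + ["deny"] * 30
recent = ["approve"] * 90 + ["deny"] * 10

if drift_exceeds(baseline, recent):
    print("Behavior has drifted from the approved baseline; trigger re-review.")
```

A flag like this doesn't say the agent is wrong. It says the agent is no longer the one that was assessed, which is the trigger for a fresh look.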

And then there are hierarchical and multi-agent systems, where multiple agents coordinate, delegate, and operate at different levels of abstraction. Accountability gets distributed across the system. When a top-level agent delegates a task to a sub-agent that produces a harmful output, where does responsibility land? The orchestrator? The sub-agent? The team that designed the delegation logic? These are live questions without settled answers.

What this means for product counsel

The practical takeaway is that your AI governance framework needs to be agent-type-aware. That means:

Tiered risk assessment. A simple reflex agent doesn't need the same review depth as a learning agent with production autonomy. Over-indexing on low-risk deployments wastes resources. Under-indexing on high-risk ones creates exposure. Match the rigor to the architecture.

Dynamic monitoring for learning agents. If the agent changes, your risk assessment needs to account for drift. That means continuous monitoring protocols, not annual reviews. Build behavioral baselines and flag deviations.

Specification review for goal-based and utility-based agents. The goal definition and utility function are legal artifacts, whether your team treats them that way or not. Product counsel should be reviewing what objectives and trade-offs are encoded before deployment.

Accountability mapping for multi-agent systems. Before you deploy a hierarchical or multi-agent architecture, map who is accountable for decisions at each level. If you can't answer that question clearly, you're not ready to deploy.

Documentation that tracks agent type. Your AI inventory should classify each deployment by agent architecture. When regulators ask — and they will — "what kind of AI system is this," you want to answer with precision, not generalities.
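The five practices above can be sketched as a single inventory record with a pre-deployment check. Everything here is an assumption for illustration: the tier numbers are a hypothetical mapping (not drawn from any regulation or from the Databricks taxonomy), and a single `accountable_owner` field stands in for what would really be a per-level accountability map.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class AgentType(Enum):
    SIMPLE_REFLEX = "simple_reflex"
    MODEL_BASED_REFLEX = "model_based_reflex"
    GOAL_BASED = "goal_based"
    UTILITY_BASED = "utility_based"
    LEARNING = "learning"
    HIERARCHICAL = "hierarchical"
    MULTI_AGENT = "multi_agent"


# Hypothetical review tiers (1 = lightest, 3 = deepest). Calibrate to your context.
REVIEW_TIER = {
    AgentType.SIMPLE_REFLEX: 1,
    AgentType.MODEL_BASED_REFLEX: 2,
    AgentType.GOAL_BASED: 2,
    AgentType.UTILITY_BASED: 2,
    AgentType.LEARNING: 3,
    AgentType.HIERARCHICAL: 3,
    AgentType.MULTI_AGENT: 3,
}

NEEDS_SPEC_REVIEW = {AgentType.GOAL_BASED, AgentType.UTILITY_BASED}
MULTI_LEVEL = {AgentType.HIERARCHICAL, AgentType.MULTI_AGENT}


@dataclass
class Deployment:
    name: str
    agent_type: AgentType
    accountable_owner: Optional[str] = None
    spec_reviewed: bool = False
    drift_monitoring: bool = False


def readiness_gaps(d: Deployment) -> List[str]:
    """List the governance gaps that apply to this agent type."""
    gaps = []
    if d.agent_type in NEEDS_SPEC_REVIEW and not d.spec_reviewed:
        gaps.append("goal or utility specification not reviewed")
    if d.agent_type is AgentType.LEARNING and not d.drift_monitoring:
        gaps.append("no drift monitoring in place")
    if d.agent_type in MULTI_LEVEL and not d.accountable_owner:
        gaps.append("no accountable owner mapped")
    return gaps


# Hypothetical deployment names.
orchestrator = Deployment("claims_orchestrator", AgentType.MULTI_AGENT)
print(REVIEW_TIER[orchestrator.agent_type], readiness_gaps(orchestrator))
```

The value of a structure like this is less the code than the discipline: once agent type is a required field in the inventory, the right review questions follow from it automatically.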

The regulatory trajectory confirms this

The EU AI Act already differentiates risk levels based on use case. But the next wave of regulatory refinement is likely to get more granular about how the system works, not just where it's deployed. An agent that learns and adapts in a high-risk context is a different regulatory object than one that follows static rules in the same context. Frameworks like NIST's AI RMF already point in this direction, emphasizing that governance should be proportionate to the actual capabilities and autonomy of the system.

Product counsel who build agent-type distinctions into their governance frameworks now won't have to retrofit later. Those who treat all agents the same will find their policies increasingly disconnected from both the technology and the regulatory landscape.

The Databricks taxonomy is a solid technical reference. The legal and governance layer on top of it is where the real work begins.