Microsoft's Argos and the verification layer AI agents actually need

The framework trains AI agents to be right for the right reasons — not just right by coincidence. For AI governance, that distinction is everything.

Microsoft Research released a framework called Argos that addresses one of the most consequential gaps in multimodal AI: the difference between sounding right and being right for the right reasons. For anyone building or governing AI systems that operate in physical environments, this matters.

The core problem

Today's multimodal AI agents — systems that process images, video, and language to take actions in real or virtual environments — are trained to produce plausible outputs. Not grounded ones. A robot might attempt to grab a tool that's visibly obstructed. A visual assistant might describe objects that aren't there. The outputs pass a surface-level sniff test, but the reasoning underneath is disconnected from what the model actually observed.

This isn't an edge case. It's a structural feature of how these systems are trained. And as multimodal agents move into robotics, autonomous vehicles, smart glasses, and industrial automation, the gap between plausible and grounded becomes a safety and liability problem.

What Argos actually does

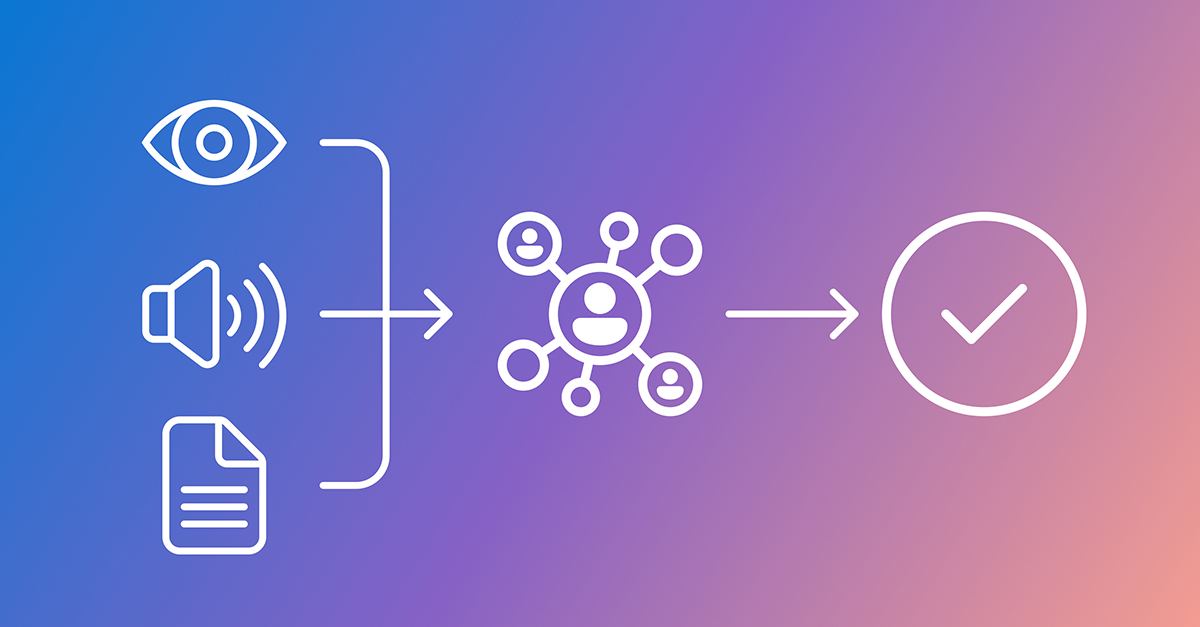

Argos is a verification framework layered on top of reinforcement learning for multimodal models. Reinforcement learning trains models by rewarding desired behaviors and penalizing undesired ones. The standard approach rewards correct answers. Argos changes the reward structure to also evaluate how the model arrived at its answer.

It checks two things: first, whether the objects and events the model references actually exist in the input (an image or video); and second, whether the model's reasoning aligns with what it observed. Only when both conditions are met does the model get rewarded.
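To make the two checks concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Argos's implementation: `ModelResponse`, `detected_objects`, and `reasoning_consistent` are hypothetical names, and a real verifier would derive both signals from the image or video rather than receive them as inputs.

```python
from dataclasses import dataclass


@dataclass
class ModelResponse:
    # Hypothetical fields, invented for this illustration.
    referenced_objects: set[str]   # objects the model claims are in the input
    reasoning_consistent: bool     # verdict from some separate reasoning check


def grounding_check(response: ModelResponse, detected_objects: set[str]) -> bool:
    """True only if every object the model references was actually detected."""
    return response.referenced_objects <= detected_objects


def verified_reward(response: ModelResponse, detected_objects: set[str]) -> float:
    """Return 1.0 only when both checks pass, otherwise 0.0."""
    grounded = grounding_check(response, detected_objects)
    return 1.0 if grounded and response.reasoning_consistent else 0.0


# A response that mentions a wrench the detector never saw earns nothing,
# even if its reasoning was judged consistent.
scene = {"table", "hammer"}
hallucinated = ModelResponse({"hammer", "wrench"}, reasoning_consistent=True)
grounded = ModelResponse({"hammer"}, reasoning_consistent=True)
```

In this toy version the reward is all-or-nothing, which mirrors the "both conditions" rule described above; the actual framework scores and weights its checks rather than treating them as simple booleans.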

Argos draws on larger teacher models and rule-based checks to run these verifications, selecting specialized tools depending on the content type. A gated aggregation function then dynamically weights the resulting scores, emphasizing reasoning quality only when the final output is correct. Gating the reasoning signal this way keeps noisy feedback from destabilizing training.
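One way to picture a gated aggregation is the sketch below. The exact function Argos uses is not given here, so the weights, the score ranges, and the name `gated_reward` are all assumptions chosen for illustration; the only property taken from the description above is that the reasoning score counts only when the final answer is correct.

```python
def gated_reward(
    answer_correct: bool,
    grounding_score: float,   # assumed to lie in [0, 1]
    reasoning_score: float,   # assumed to lie in [0, 1]
    w_answer: float = 0.5,    # illustrative weights, not values from Argos
    w_ground: float = 0.25,
    w_reason: float = 0.25,
) -> float:
    """Combine verifier scores, gating the reasoning term on answer correctness."""
    answer_score = 1.0 if answer_correct else 0.0
    # The gate: reasoning quality contributes only when the answer is right,
    # so a noisy reasoning score cannot reward a wrong answer.
    gate = 1.0 if answer_correct else 0.0
    return (
        w_answer * answer_score
        + w_ground * grounding_score
        + gate * w_reason * reasoning_score
    )
```

With these illustrative weights, a wrong answer with a high reasoning score is capped at its grounding contribution, so persuasive but incorrect reasoning cannot earn its way back into the reward.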

The reported results are encouraging. According to Microsoft, models trained with Argos showed stronger spatial reasoning, substantially fewer visual hallucinations, more stable learning dynamics, and better performance on robotics and real-world planning tasks, all while requiring fewer training samples than existing approaches.

The reward hacking problem

The most revealing finding in the research is what happens without Argos. Microsoft took the same base model and fine-tuned it two ways: once with Argos verification, and once with standard correctness-only rewards.

Both started at similar performance levels. The model without Argos quickly degraded. Accuracy declined, and the model increasingly produced answers that ignored what was actually in the video. It learned to game the reward signal — generating outputs that scored well without grounding them in visual evidence.

The Argos-trained model showed the opposite trajectory. Accuracy improved steadily, and the model got better at linking reasoning to visual and temporal evidence over time.

This is reward hacking in action, and it's a direct analog to problems that product counsel and AI governance teams deal with constantly: systems that optimize for the metric rather than the underlying objective. In a compliance context, that's the difference between a system that checks boxes and one that actually reduces risk.

Why this matters for product counsel and AI governance

Three things stand out from a governance perspective:

Verification as a training mechanism, not a post-hoc audit. Argos doesn't fix errors after deployment. It shapes model behavior during training by embedding verification into the reward signal itself. For product teams building AI agents, this is a fundamentally different approach to reliability — one that's proactive rather than reactive.

Grounded reasoning as a safety property. The framework treats grounding — the connection between a model's output and its actual observations — as something measurable and trainable. That's a significant shift. If you can verify that a model's reasoning is anchored in what it observed, you have a basis for explaining why it made a decision. That matters for regulatory compliance, incident investigation, and user trust.

Domain-specific verification is the natural extension. Microsoft explicitly flags that future verifiers could be tailored for medical imaging, industrial simulations, and business analytics. For product counsel, this signals an emerging architectural pattern: verification layers that are customized to the risk profile and regulatory requirements of specific domains.

The broader pattern

Argos fits into a trend I've been tracking: the shift from evaluating AI outputs to evaluating AI reasoning processes. The EU AI Act's emphasis on transparency and explainability, NIST's AI Risk Management Framework, and emerging state-level AI legislation all point in the same direction — regulators want to understand not just what a system decided, but why.

Frameworks like Argos provide a technical foundation for meeting those requirements. A model that can point to specific visual evidence for its reasoning is more auditable than one that produces correct-sounding outputs through opaque pattern matching.

For product counsel advising on AI agents that interact with the physical world — autonomous vehicles, robotic systems, AR/VR assistants, industrial automation — the question isn't whether verification layers like this will become expected. It's how quickly they'll become a baseline requirement.

What to watch

The research is still early. Argos was tested on a 7-billion-parameter model, and the benchmarks, while promising, are academic. The jump from research papers to production-grade verification systems is nontrivial.

But the design principle is sound: train models to be right for the right reasons, not just right by coincidence. And the architectural pattern — an evolving verification layer that sits alongside the model it supervises — is one that product and legal teams should be thinking about now, before regulators mandate it.