Memory-driven AI agents create governance problems, not just engineering ones

AI agents with memory aren't just smarter; they're harder to govern. Each memory layer creates distinct privacy and retention obligations that product counsel needs to address at the architecture stage.

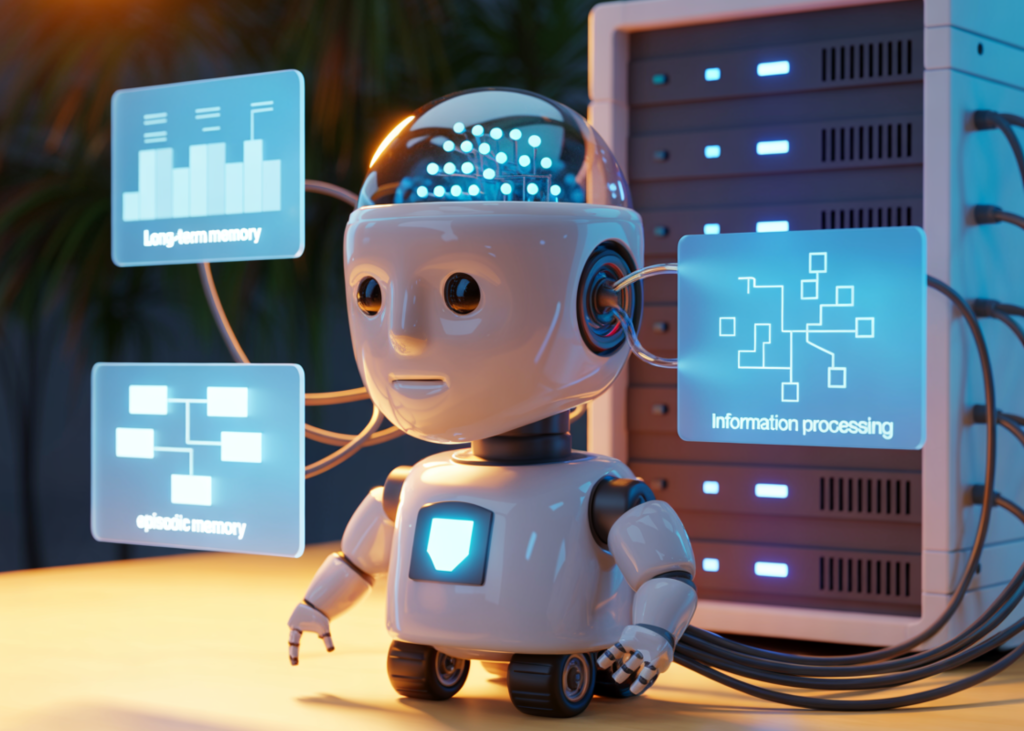

There's a useful technical walkthrough from MarkTechPost on how to build memory-driven AI agents with short-term, long-term, and episodic memory. It's aimed at developers, and it does a solid job explaining the architecture. But reading it through a product counsel lens, what jumps out isn't the engineering — it's the governance surface area that each memory layer creates.

Three memory types, three distinct risk profiles

The article breaks AI agent memory into three categories:

Short-term memory holds the current conversation context. From a legal standpoint, this is the most familiar — it's session data, and most privacy frameworks handle it reasonably well. It's ephemeral by design, which limits exposure.

Long-term memory is where things get interesting. This is persistent knowledge the agent retains across sessions — user preferences, facts about an organization, accumulated context. The moment you persist data across sessions, you've built a data store. That means retention policies, deletion obligations, and — depending on jurisdiction — potentially a new processing purpose under GDPR or similar frameworks. Product teams often treat long-term memory as a feature. Product counsel should treat it as a data lifecycle event.

Episodic memory is the most legally complex. This is the agent recalling what happened in prior interactions: not just facts, but sequences of events, decisions made, and outcomes observed. In practice, episodic memory means the agent is building a behavioral record. For product counsel, that raises immediate questions: Is this profiling as defined in Article 4(4) of the GDPR? Does the user know the agent is learning from their interaction patterns? Can the user access or delete specific episodes? What happens when episodic memory captures information about third parties who never consented to being remembered?
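One way to make the three risk profiles concrete is to tag every memory record with its tier, the user it belongs to, and a documented purpose at the moment it is written. The sketch below is illustrative only: `MemoryTier`, `MemoryRecord`, and `needs_legal_review` are hypothetical names, not taken from the MarkTechPost walkthrough, and the triage rule is an assumed example rather than a legal test.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class MemoryTier(Enum):
    SHORT_TERM = "short_term"  # current conversation context
    LONG_TERM = "long_term"    # facts and preferences kept across sessions
    EPISODIC = "episodic"      # records of what happened in past interactions


@dataclass(frozen=True)
class MemoryRecord:
    tier: MemoryTier
    subject_id: str   # the user the memory was created for
    purpose: str      # documented reason for remembering this
    content: str
    # Other people mentioned in the memory, if the system can identify them.
    third_party_ids: tuple[str, ...] = ()
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def needs_legal_review(record: MemoryRecord) -> bool:
    """Hypothetical triage rule: flag persisted memories that have no
    stated purpose or that mention third parties."""
    persisted = record.tier is not MemoryTier.SHORT_TERM
    missing_purpose = not record.purpose.strip()
    return persisted and (missing_purpose or bool(record.third_party_ids))
```

Under these assumptions, a short-term record passes through untouched, while an episodic record that mentions a colleague gets flagged even when its purpose is documented.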

The real issue: memory as an emergent data architecture

Most organizations have spent years building data governance around databases, data lakes, and defined storage systems. Memory-driven AI agents sidestep all of that. The agent's memory isn't a database anyone provisioned or a table anyone designed — it's an emergent data store that grows organically through use.

Which means the standard governance playbook doesn't apply cleanly. There's no schema to audit. There's no predefined retention schedule. The "data" in an agent's memory might be a compressed embedding that doesn't map neatly to a single data subject's record. Try fulfilling a data subject access request (DSAR) against an episodic memory layer and you'll see the problem immediately.
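A rough sketch of what makes a DSAR tractable: record which data subjects each memory entry relates to at write time, so access and erasure become index lookups instead of forensic work. The `SubjectIndexedMemory` class below is a hypothetical toy store, not a real library, and it assumes the system can identify the subjects when the memory is created. It does not solve the harder case of embeddings that blend several people's data.

```python
from collections import defaultdict


class SubjectIndexedMemory:
    """Toy in-memory store that tracks which subjects each entry relates to."""

    def __init__(self):
        self._entries = {}                    # entry id -> content
        self._subjects = {}                   # entry id -> set of subject ids
        self._by_subject = defaultdict(set)   # subject id -> set of entry ids
        self._next_id = 0

    def remember(self, content, subject_ids):
        """Store an entry and index it under every subject it relates to."""
        entry_id = self._next_id
        self._next_id += 1
        self._entries[entry_id] = content
        self._subjects[entry_id] = set(subject_ids)
        for subject_id in subject_ids:
            self._by_subject[subject_id].add(entry_id)
        return entry_id

    def export_for(self, subject_id):
        """Access request: return every entry linked to the subject."""
        entry_ids = sorted(self._by_subject.get(subject_id, set()))
        return [self._entries[i] for i in entry_ids]

    def erase(self, subject_id):
        """Erasure request: delete every entry linked to the subject,
        including entries shared with others. Returns the number deleted."""
        entry_ids = self._by_subject.pop(subject_id, set())
        for entry_id in entry_ids:
            del self._entries[entry_id]
            for other in self._subjects.pop(entry_id):
                if other != subject_id:
                    self._by_subject[other].discard(entry_id)
        return len(entry_ids)


memory = SubjectIndexedMemory()
memory.remember("Alice prefers email", {"alice"})
memory.remember("Alice and Bob disagreed on pricing", {"alice", "bob"})
memory.remember("Bob is on leave", {"bob"})
print(memory.export_for("alice"))  # both entries that mention Alice
memory.erase("alice")
print(memory.export_for("bob"))    # the shared entry is gone for Bob too
```

Note the design choice in `erase`: a shared memory is deleted outright, so one person's erasure request changes what the agent remembers about someone else. Redacting the entry instead is an equally plausible policy, and deciding between them is a governance call rather than an engineering one.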

For product counsel advising on agentic AI, the architectural conversation needs to happen before the memory system is built, not after. The questions that matter:

- What gets remembered, and why? Every memory type needs a defined purpose. "It makes the agent smarter" isn't a specified purpose, and it isn't a lawful basis either.

- Who controls the memory? If the agent serves multiple users, whose memories take priority? Can one user's deletion request affect another user's experience?

- How do you audit memory? If you can't inspect what the agent remembers, you can't govern it. Observability isn't optional.

- What's the retention policy for each memory tier? Short-term, long-term, and episodic memory should have different lifecycles, and those lifecycles should be documented before launch.
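The retention question above can be sketched as a per-tier policy table plus a purge routine. Everything here is an assumption for illustration: the `RETENTION` periods are placeholder values, not recommendations, and `purge_expired` is a hypothetical helper. The one deliberate design choice is that a tier without a documented retention period raises an error instead of being kept indefinitely.

```python
from datetime import datetime, timedelta

# Hypothetical retention periods; real values are a legal and product decision.
RETENTION = {
    "short_term": timedelta(hours=1),
    "long_term": timedelta(days=365),
    "episodic": timedelta(days=90),
}


def purge_expired(records, now: datetime):
    """Split records into (kept, purged) using each tier's retention period.

    Each record is a dict with "tier" and "created_at" keys. A tier with no
    documented retention period raises KeyError rather than being retained
    forever by default.
    """
    kept, purged = [], []
    for record in records:
        limit = RETENTION[record["tier"]]
        age = now - record["created_at"]
        (purged if age > limit else kept).append(record)
    return kept, purged
```

Returning the purged records rather than silently dropping them gives the caller something to write to an audit log, which speaks to the observability question as well.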

Where this is headed

The MarkTechPost article is a good technical primer, and product counsel working on AI agents should read it, not to learn how to code memory systems, but to understand the architectural decisions that will land on their desks as governance questions six months from now.

Memory-driven agents are going to become the norm. The agents that just respond to a single prompt without context will feel like dial-up compared to agents that remember your preferences, learn from past interactions, and adapt over time. That's a genuinely better user experience. It's also a genuinely harder governance problem.

The organizations that get this right will be the ones where product counsel is in the room when the memory architecture is designed — not reviewing it in a privacy impact assessment after it's already in production.