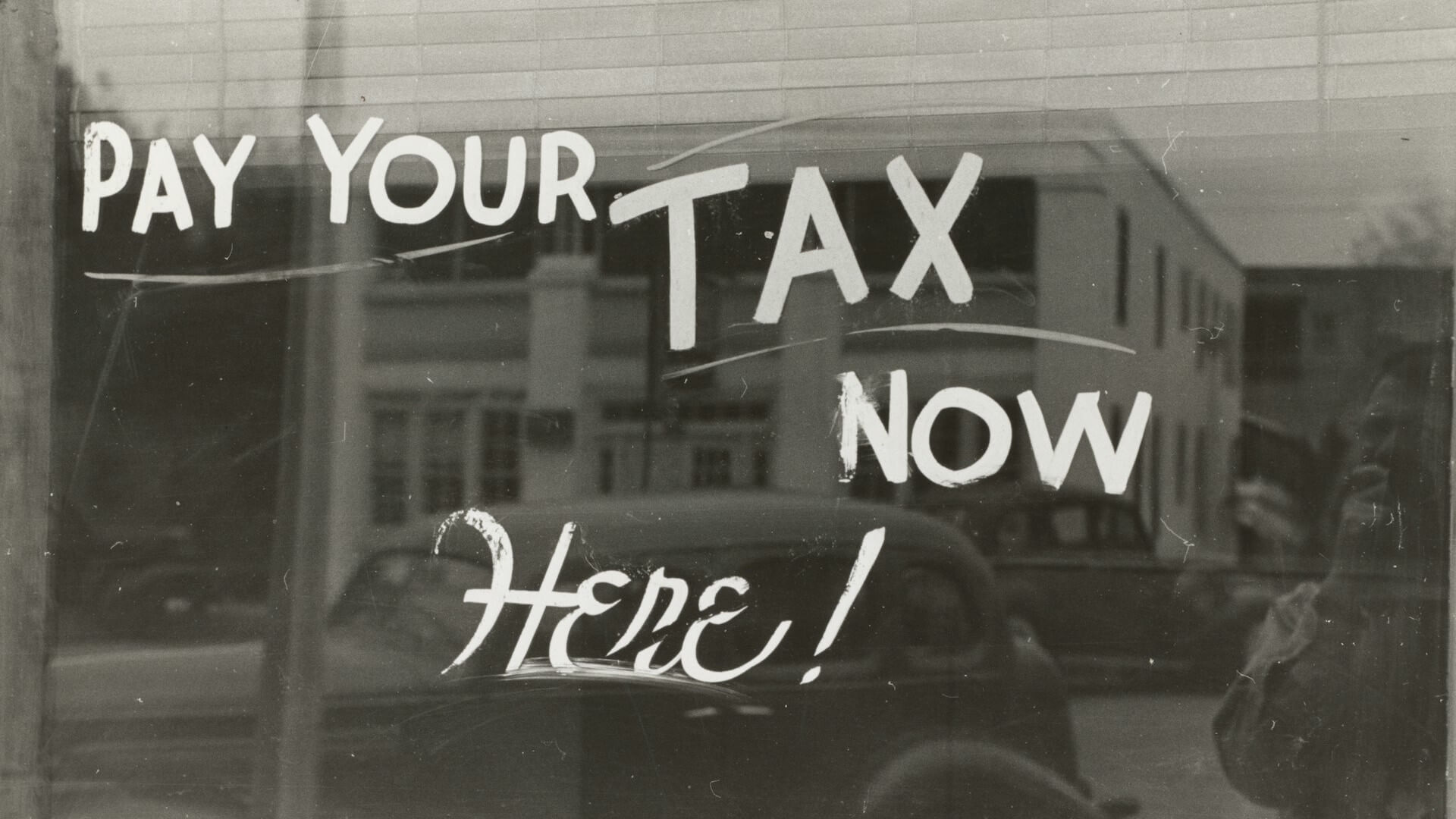

AI agents demand upfront reliability investment to avoid operational tax

AI agents need reliability built into their architecture from day one to avoid the ongoing "reliability tax" of operational breakdowns, legal exposure, and reputational damage from unreliable autonomous systems.

Salesforce's Mohith Shrivastava warns that unreliable AI agents generate a "reliability tax": the ongoing operational, legal, and reputational costs organizations face when they deploy agentic AI without proper safeguards. Writing for The New Stack, he explains how the industry has shifted from deterministic automation to probabilistic autonomy, introducing new failure modes that call for architectural changes.

Shrivastava identifies five pillars of reliability: predictability, fidelity, controllability, robustness, and safety. He contends that context engineering, the deliberate design of the entire context an agent works in (prompts, tools, and constraints), matters more than prompt engineering alone for ensuring reliable agent behavior. This approach incorporates zero trust controls, human-in-the-loop checkpoints, and thorough monitoring.
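To make those three controls concrete, here is a minimal Python sketch of how they might sit in front of an agent's tool calls. It is an illustration under stated assumptions, not Shrivastava's design or any vendor's API: the names `ToolPolicy`, `AgentGate`, `approver`, and the example tools are all hypothetical. Tools are denied unless explicitly allowed (zero trust), flagged tools wait on an approval callback (human-in-the-loop), and every decision is appended to an audit log (monitoring).

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolPolicy:
    """Hypothetical per-tool policy; the defaults deny everything."""
    allowed: bool = False
    needs_approval: bool = False


@dataclass
class AgentGate:
    """Hypothetical gate that every agent tool call must pass through."""
    policies: Dict[str, ToolPolicy]
    approver: Callable[[str, dict], bool]  # stand-in for a human reviewer
    audit_log: List[dict] = field(default_factory=list)

    def call(self, tool: str, args: dict, handler: Callable[..., Any]) -> Any:
        # Zero trust: a tool with no policy entry gets the deny-all default.
        policy = self.policies.get(tool, ToolPolicy())
        if not policy.allowed:
            decision = "denied"
        # Human-in-the-loop: flagged tools run only if the approver agrees.
        elif policy.needs_approval and not self.approver(tool, args):
            decision = "rejected"
        else:
            decision = "executed"
        # Monitoring: record every decision, including refusals.
        self.audit_log.append({"tool": tool, "args": args, "decision": decision})
        if decision != "executed":
            raise PermissionError(f"{tool}: {decision}")
        return handler(**args)


# Example wiring; the approver here is a simple rule standing in for a person.
gate = AgentGate(
    policies={
        "lookup_order": ToolPolicy(allowed=True),
        "issue_refund": ToolPolicy(allowed=True, needs_approval=True),
    },
    approver=lambda tool, args: args.get("amount", 0) <= 100,
)
status = gate.call(
    "lookup_order",
    {"order_id": "A1"},
    lambda order_id: {"order_id": order_id, "status": "shipped"},
)
```

The point of the design is that the agent never calls a tool directly, so adding a new capability means adding a policy entry rather than trusting the prompt to restrain the model.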

Does this indicate a shift in how we perceive AI deployment risk? Reliability stops being a concern addressed only after deployment and becomes a core architectural principle. That means integrating governance and risk controls during the design phase rather than adding them after issues arise. The cost of reliability then becomes a predictable infrastructure expense rather than an emergency response budget.